Yansheng Li, Linlin Wang, Tingzhu Wang, Xue Yang, Junwei Luo, Qi Wang, Youming Deng, Wenbin Wang, Xian Sun, Haifeng Li, Bo Dang, Yongjun Zhang, Yi Yu, and Junchi Yan

Illustration of scene graph generation (SGG) in large-size very-high-resolution (VHR) satellite imagery (SAI). Black arrows denote semantic relationships that can be predicted from an isolated object pair alone, whereas red arrows denote semantic relationships that must be inferred with the aid of context.

RSG, the first large-scale dataset for OBD and SGG in large-size VHR SAI.

To address the scarcity of datasets, we construct RSG, a large-scale dataset with more than 210,000 objects and over 400,000 triplets for SGG in large-size VHR SAI. The dataset contains SAI with spatial resolutions from 0.15 m to 1 m, covering 11 categories of complex geospatial scenarios closely associated with human activities worldwide (e.g., airports, ports, nuclear power stations, and dams). Under the guidance of SAI experts, all objects are classified into 48 fine-grained categories and precisely annotated with oriented bounding boxes (OBB), and all relationships are annotated under 8 major categories comprising 58 fine-grained categories. Object pairs and their relationships are annotated one-to-many (a single object pair can carry several relationships), and all relationship annotations are absolute, i.e., unaffected by image rotation. Overall, RSG has significant advantages over existing OBD and SGG datasets in SAI. To the best of our knowledge, RSG is the first large-scale dataset for SGG in large-size VHR SAI.
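The annotation scheme described above (one OBB per object, possibly several relationships per ordered object pair, and relationship labels that do not depend on image orientation) can be pictured with a small in-memory structure. This is a minimal sketch under stated assumptions, not RSG's actual file format or the SGG-ToolKit API: the `(cx, cy, w, h, theta)` OBB parameterization, the class names `OrientedBox`, `SceneObject`, and `SceneGraph`, and the predicate strings "docked at" and "parallel to" are all hypothetical.

```python
# Hypothetical structures for illustration only; NOT the RSG file format or the SGG-ToolKit API.
import math
from collections import defaultdict
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class OrientedBox:
    """Assumed OBB parameterization: center (cx, cy), size (w, h), angle theta in radians."""
    cx: float
    cy: float
    w: float
    h: float
    theta: float

    def corners(self):
        """Return the four corners of the rotated rectangle."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        half = ((-self.w / 2, -self.h / 2), (self.w / 2, -self.h / 2),
                (self.w / 2, self.h / 2), (-self.w / 2, self.h / 2))
        # Rotate each half-size offset by theta, then translate to the box center.
        return [(self.cx + dx * c - dy * s, self.cy + dx * s + dy * c) for dx, dy in half]

    def rotated(self, angle, ox, oy):
        """Rotate the whole box by `angle` radians about the image point (ox, oy)."""
        c, s = math.cos(angle), math.sin(angle)
        dx, dy = self.cx - ox, self.cy - oy
        return OrientedBox(ox + dx * c - dy * s, oy + dx * s + dy * c,
                           self.w, self.h, self.theta + angle)


@dataclass(frozen=True)
class SceneObject:
    obj_id: int
    category: str
    box: OrientedBox


class SceneGraph:
    """Objects plus a one-to-many map from ordered (subject, object) pairs to predicates."""

    def __init__(self):
        self.objects = {}
        self.relations = defaultdict(set)

    def add_object(self, obj):
        self.objects[obj.obj_id] = obj

    def add_relation(self, subj_id, predicate, obj_id):
        # One ordered pair may carry several predicates (one-to-many annotation).
        self.relations[(subj_id, obj_id)].add(predicate)

    def triplets(self):
        """Flatten to sorted (subject category, predicate, object category) triplets."""
        return sorted(
            (self.objects[s].category, p, self.objects[o].category)
            for (s, o), preds in self.relations.items()
            for p in preds
        )

    def rotated(self, angle, ox, oy):
        """Rotate every box; predicates are copied unchanged (absolute relationships)."""
        g = SceneGraph()
        for obj in self.objects.values():
            g.add_object(replace(obj, box=obj.box.rotated(angle, ox, oy)))
        for pair, preds in self.relations.items():
            g.relations[pair] = set(preds)
        return g


if __name__ == "__main__":
    g = SceneGraph()
    g.add_object(SceneObject(0, "ship", OrientedBox(100, 50, 60, 12, 0.3)))
    g.add_object(SceneObject(1, "dock", OrientedBox(110, 70, 200, 20, 0.3)))
    g.add_relation(0, "docked at", 1)
    g.add_relation(0, "parallel to", 1)
    print(g.triplets())
    print(g.rotated(math.pi / 2, 512, 512).triplets() == g.triplets())
```

Because the predicates in this sketch are stored per object pair rather than derived from box geometry, rotating the image moves every box but leaves the triplets intact, which mirrors the statement that RSG relationship annotations are absolute.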

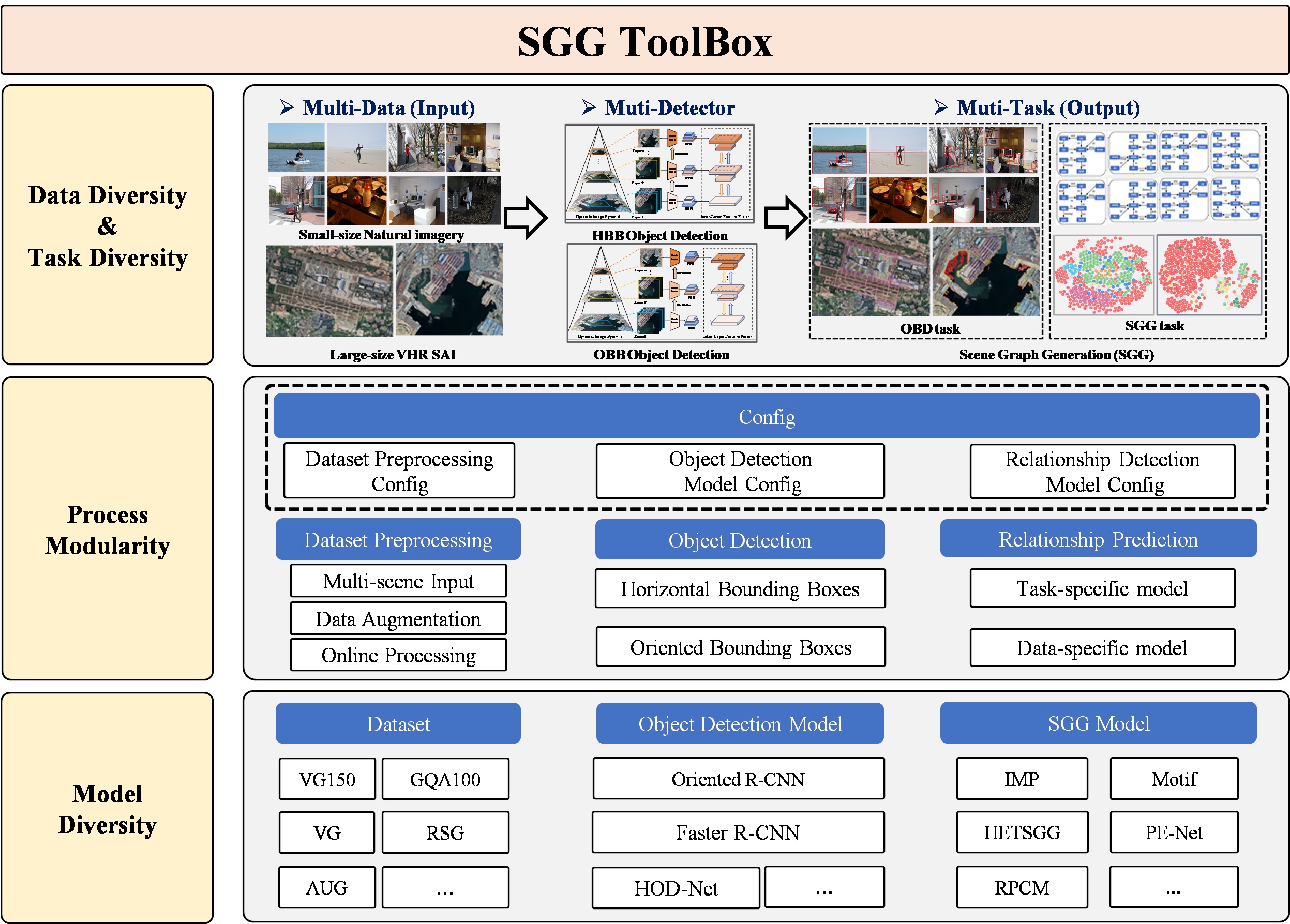

We release an SAI-oriented SGG toolkit (https://github.com/Zhuzi24/SGG-ToolKit) with about 30 OBD methods and 10 SGG methods for large-size VHR SAI.

This work was supported by the National Natural Science Foundation of China.

If you find this work helpful for your research, please consider citing our paper and starring ⭐ the SAI-oriented SGG toolkit:

@article{li2024scene,
  title={Scene Graph Generation in Large-Size VHR Satellite Imagery: A Large-Scale Dataset and A Context-Aware Approach},
  author={Li, Yansheng and Wang, Linlin and Wang, Tingzhu and Yang, Xue and Luo, Junwei and Wang, Qi and Deng, Youming and Wang, Wenbin and Sun, Xian and Li, Haifeng and Dang, Bo and Zhang, Yongjun and Yu, Yi and Yan, Junchi},
  journal={arXiv preprint arXiv:2406.09410},
  year={2024}
}

@article{luo2024sky,
  title={SkySenseGPT: A Fine-Grained Instruction Tuning Dataset and Model for Remote Sensing Vision-Language Understanding},
  author={Luo, Junwei and Pang, Zhen and Zhang, Yongjun and Wang, Tingzhu and Wang, Linlin and Dang, Bo and Lao, Jiangwei and Wang, Jian and Chen, Jingdong and Tan, Yihua and Li, Yansheng},
  journal={arXiv preprint arXiv:},
  year={2024}
}

@article{li2024learning,
  title={Learning to Holistically Detect Bridges From Large-Size VHR Remote Sensing Imagery},
  author={Li, Yansheng and Luo, Junwei and Zhang, Yongjun and Tan, Yihua and Yu, Jin-Gang and Bai, Song},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  volume={44},
  number={11},
  pages={7778--7796},
  year={2022},
  publisher={IEEE}
}

E-mail: yansheng.li@whu.edu.cn; wangll@whu.edu.cn; tingzhu.wang@whu.edu.cn; luojunwei@whu.edu.cn